Automating Webpage & Tweet Screencaptures

Originally posted on the Scholars’ Lab blog on January 30, 2019.

Do you have a list of webpage URLs for which you’d like screencaps (aka screenshots)?

(Know this tutorial fits your needs? Jump to the instructions.)

Maybe you study social media: you can capture all the data about a tweet using Documenting the Now’s tools and get a spreadsheet listing all of a hashtag’s tweets with text, file names for any image attachments, publication date, RT/like counts…

But maybe you’d also like screencaps of each tweet’s webpage as it appeared at a given time:

- keep an offline folder of all your faved tweets? (before time passes and some of them are deleted)

- record how tweets looked on Twitter.com at a given point in time, e.g. the avatars of folks who interacted with the tweet (people change their avatars over time, so a screencap preserves the images that were current when it was taken), or what metadata was viewable to the casual observer, as with this example tweet screencap:

Having screencaps of tweets can be useful for Twitter receipts, conducting a user study where you want to gauge folks’ reactions to seeing a tweet as it looked on Twitter.com (without needing to be online), or keeping a folder of motivating tweets to refer to when you need encouragement.

This post will help you automate taking screencaps from a list of URLs by pointing you to two tutorials, plus some advice on combining them. This tutorial is written from a Mac user’s perspective.

My use cases

I recently had two needs for a workflow automating screencaps:

- Screencapping faved tweets (aka motivation): I used to “like” tweets where folks said useful things about my work, for later encouragement when I needed it. I wanted to switch to just screencapping these tweets as I saw them, saving the “like” function to indicate interest in others’ tweets, but that meant I needed a way to get all those previous likes as screencaps. This workflow gave me a folder of over 2k screencapped tweets! (Now I just need to make a bot to show me a random one each time I log in…)

- Figuring out how many of our posts were amplified by a DH aggregator: For an external review of DH at UVA, we’re drafting a report on Scholars’ Lab as a DH unit. As a small part of this, I wanted to include the number of times SLab staff or students have had a blog post highlighted by Digital Humanities Now. Searching DH Now with a variety of search terms (e.g. Praxis, Neatline, variants of “Scholars’ Lab”, names of former staff) turned up a bunch of posts, but without opening each result in a browser to skim that post, I couldn’t be sure that these were all actually either SLab-authored or citing SLab work (vs. e.g. happening to use the word “praxis”). By automating screencaps of all the links my search turned up, I could use Preview to bulk-open the screencaps and glance over the top of each webpage, seeing more quickly than by opening each link in a browser whether a post was related to SLab or not.

Credits

This is an extremely light repackaging of two sets of advice, for which I am grateful:

and

- Joshua Johnson’s 2011 post “AppleScript: Automatically Create Screenshots from a List of Websites”.

You may also wish to explore Documenting the Now’s tools for ethical preservation and research with social media, including the Tweet Catalogue to locate datasets of tweets using a given hashtag, and the Hydrator tool for turning those datasets back into a list of tweets and their metadata.

How to automate screencapping

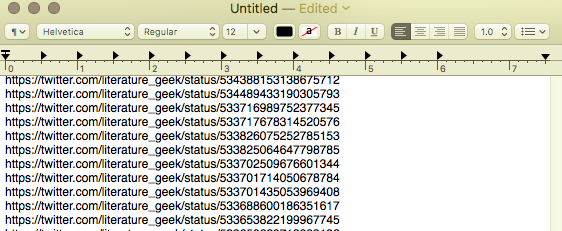

1. You’ll need a text document (plain text / .txt) with each URL you want to be screencapped listed on a separate line:

If you want to create a list of just your faved tweets, see this tweet by Ryan Bauman to get just the liked tweets from your Twitter > Settings > “Your Twitter Data” download.
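If you’d rather script that step, here is a minimal Python sketch. It is not the method from the tweet, and it rests on assumptions about the download that you should check against your own files: that the likes live in a file (I call it `like.js` here) holding a JavaScript assignment whose value is a JSON list, and that each entry looks like `{"like": {"tweetId": "..."}}`. The function name `liked_tweet_urls` is mine.

```python
import json


def liked_tweet_urls(like_js_text):
    """Return one tweet URL per liked tweet found in the text of a likes file."""
    # ASSUMPTION: the file is a JavaScript assignment whose value is a JSON
    # list, so the text between the first "[" and the last "]" parses as JSON.
    start = like_js_text.index("[")
    end = like_js_text.rindex("]")
    entries = json.loads(like_js_text[start:end + 1])

    urls = []
    for entry in entries:
        # ASSUMPTION: each entry looks like {"like": {"tweetId": "..."}}.
        tweet_id = entry.get("like", {}).get("tweetId")
        if tweet_id:
            # This URL form does not need the author's username.
            urls.append(f"https://twitter.com/i/web/status/{tweet_id}")
    return urls


# A tiny made-up sample in the assumed format (these IDs are not real tweets).
SAMPLE_LIKES = """window.YTD.like.part0 = [
  {"like": {"tweetId": "111"}},
  {"like": {"tweetId": "222"}}
]"""

sample_urls = liked_tweet_urls(SAMPLE_LIKES)
```

To use it on a real download, you could read the file’s text with `open(...).read()`, pass it to `liked_tweet_urls`, and write the result to a .txt file with `"\n".join(urls)`, which gives you the one-URL-per-line list this step needs.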

If you have a bunch of bookmarked URLs you want to screenshot (possibly in one bookmarks subfolder?), you can export your whole set of bookmarks from the browser, then edit the resulting HTML file using search+replace or regular expressions to strip it down to just a list of the URLs you want to screencap. (Sorry, regular expressions are a whole separate lengthy tutorial, but Doug Knox’s tutorial is a good place to start understanding them.)
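If regular expressions feel like too much, a short Python script can pull the links out of the exported file instead. This is only a sketch: it assumes your browser exports the common bookmarks HTML layout, where each bookmark is an `<A HREF="...">` link and each folder is an `<H3>` title followed by a `<DL>` list. The names `BookmarkLinks` and `bookmark_urls` are made up for this example.

```python
from html.parser import HTMLParser


class BookmarkLinks(HTMLParser):
    """Collect bookmark URLs, optionally only those inside one named folder."""

    def __init__(self, folder=None):
        super().__init__()
        self.folder = folder      # folder title to keep, or None for everything
        self.urls = []
        self._stack = []          # titles of the folders we are currently inside
        self._in_heading = False  # True while reading an <H3> folder title
        self._heading = ""
        self._pending = None      # folder title waiting for its <DL> list

    def handle_starttag(self, tag, attrs):
        if tag == "h3":
            self._in_heading = True
            self._heading = ""
        elif tag == "dl":
            # A <DL> opens the list belonging to the most recent <H3>, if any.
            self._stack.append(self._pending)
            self._pending = None
        elif tag == "a":
            href = dict(attrs).get("href")
            wanted = self.folder is None or self.folder in self._stack
            # Skip bookmarklets and other links that are not web pages.
            if href and wanted and href.startswith(("http://", "https://")):
                self.urls.append(href)

    def handle_data(self, data):
        if self._in_heading:
            self._heading += data

    def handle_endtag(self, tag):
        if tag == "h3":
            self._in_heading = False
            self._pending = self._heading.strip()
        elif tag == "dl" and self._stack:
            self._stack.pop()


def bookmark_urls(html_text, folder=None):
    """Return the bookmark URLs found in exported bookmarks HTML."""
    parser = BookmarkLinks(folder)
    parser.feed(html_text)
    parser.close()
    return parser.urls


# A small made-up export in the assumed layout.
SAMPLE_BOOKMARKS = """<DL><p>
<DT><A HREF="https://example.com/a">A</A>
<DT><H3>To screencap</H3>
<DL><p>
<DT><A HREF="https://example.com/b">B</A>
<DT><A HREF="javascript:void(0)">bookmarklet</A>
</DL><p>
<DT><A HREF="https://example.com/c">C</A>
</DL><p>"""
```

With the sample above, `bookmark_urls(SAMPLE_BOOKMARKS)` picks up the three example.com links, and `bookmark_urls(SAMPLE_BOOKMARKS, folder="To screencap")` keeps only the one inside that folder. Joining the result with newlines and saving it as a .txt file gives you the URL list.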

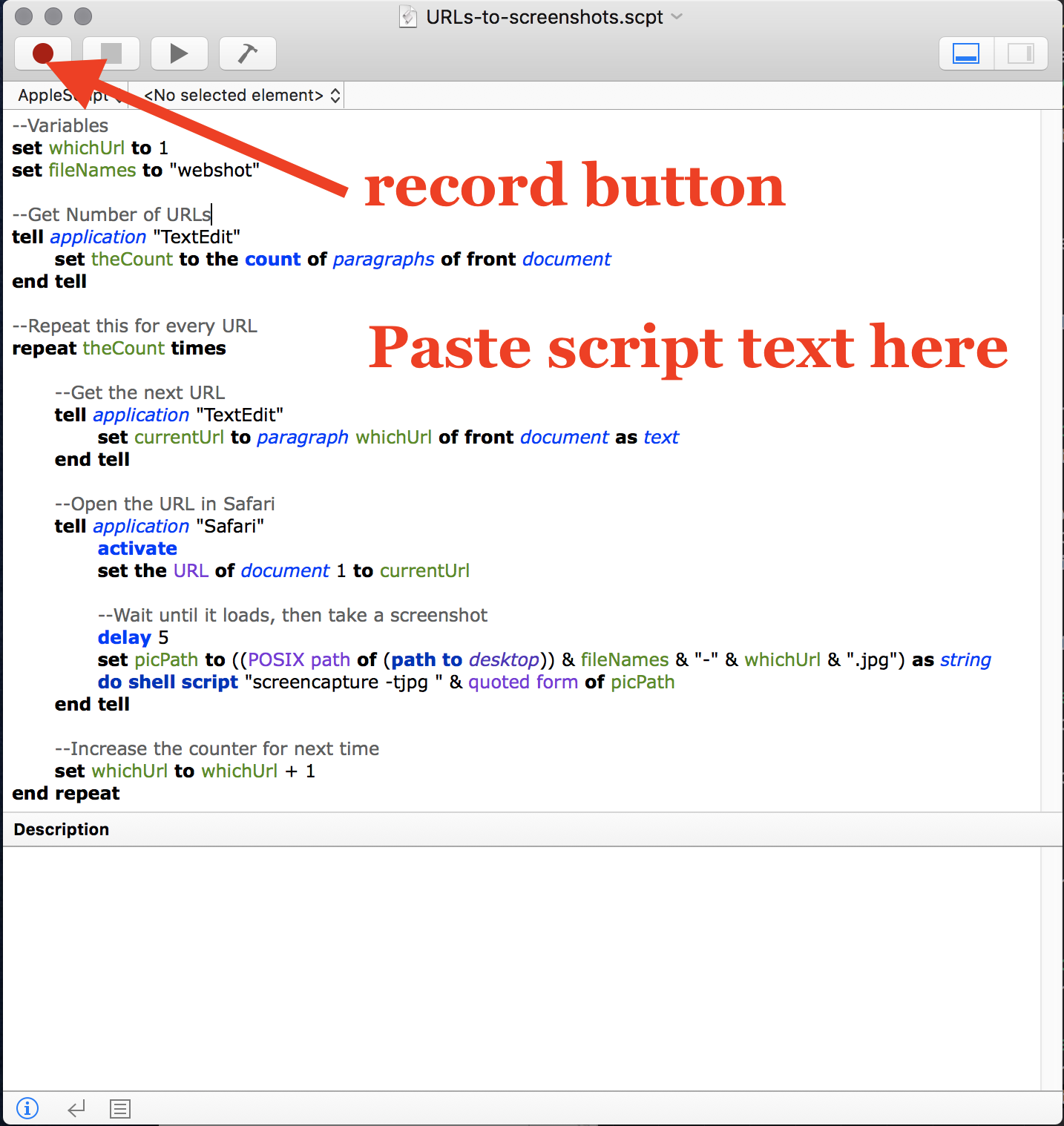

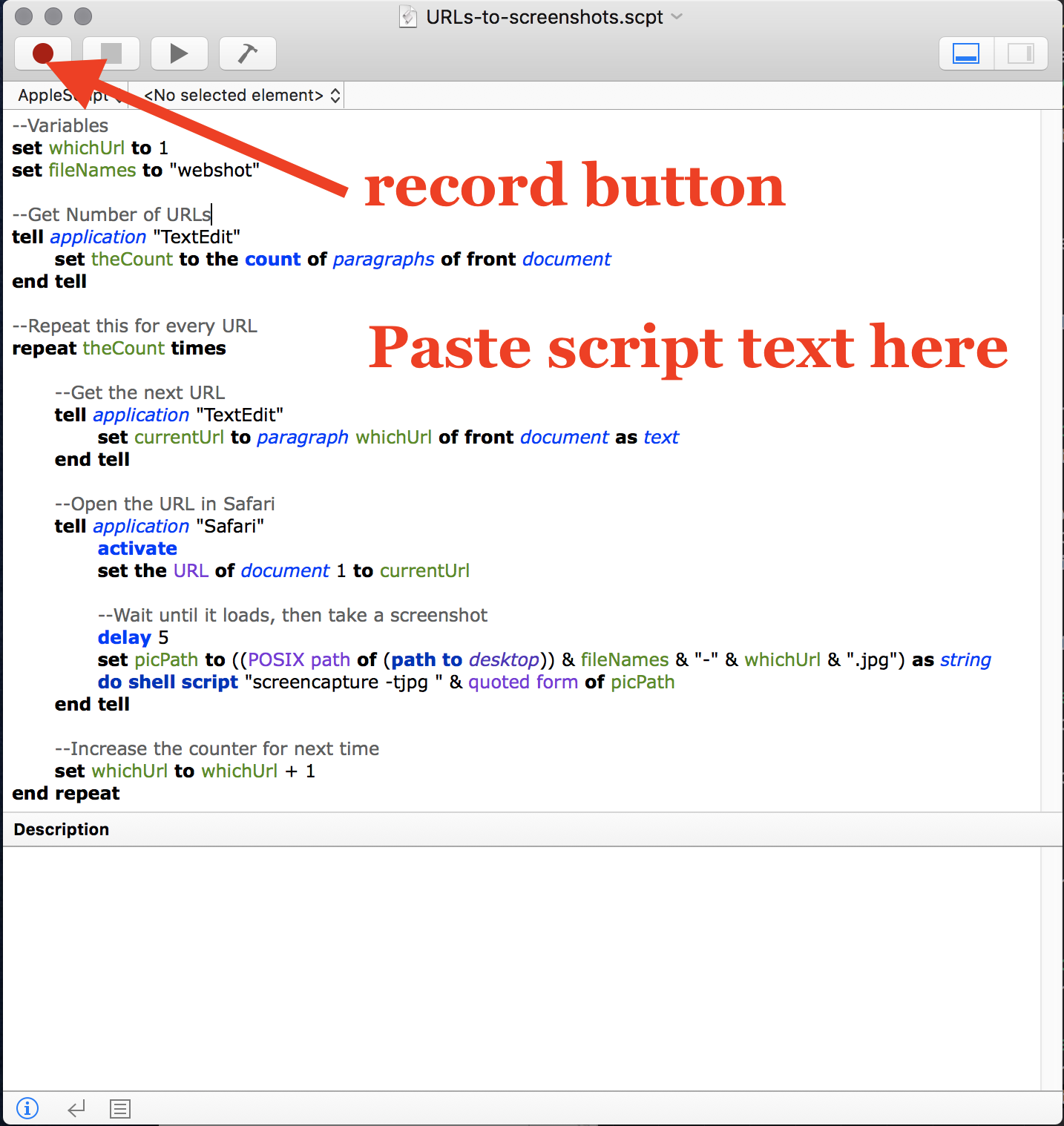

2. Next, we’ll use Joshua Johnson’s 2011 post “AppleScript: Automatically Create Screenshots from a List of Websites” to actually take the screencaps.

We’re going to use the Mac “Script Editor” tool to tell our computer to take that list of URLs, open each in Safari, take a screencap of that screen, and save it.

Open the Script Editor app (Applications > Utilities > Script Editor).

3. Grab the text from this page, which puts Joshua Johnson’s script in an easy-to-find place (to understand what the script does, read his post where he walks you through adding and adjusting the different pieces). Paste the text into the top text field:

If you have a slow internet connection or want to screencap pages on websites that load slowly, you may wish to find the piece of the script that says “delay 5” and change the 5 to a larger number. This controls how many seconds the script waits between directing Safari to visit a given URL and taking a screencap of whatever is then on the Safari screen.

4. Open the Applications > Safari browser, and drag its window to be as large as possible. Depending on the zoom level you usually use in Safari, you may wish to zoom in or out for the purpose of taking the screencaps; the screencaps will only capture what’s showing in the window you’ve opened (not stuff you’d need to scroll down to see).

5. Open the Applications > TextEdit app (not a different text editor, unless you want to figure out how to edit the script’s tell application "TextEdit" line to point to a different application). Paste in your list of URLs.

If you have other TextEdit windows/tabs, make sure that the one with the URL list is the topmost/frontmost.

6. You’re ready to take screencaps! You’ll want to do this when you’re okay not having access to your computer for a while, because the screenshots will be of whatever is at the front of your screen. If you step away from your computer, the script knows to leave a Safari browser window open at the front of your screen, take a screencap, then move to the next webpage in your list and screencap that. If you hide the Safari window or have other stuff open over it, you won’t get the screencaps you want. (I’m sure there’s a way to make this not the case, but I didn’t need to figure that out for my use cases.)

Back on the Script Editor, press the “red dot” record icon to begin automatically taking a screencap of each of the URLs in your list:

When the script finishes running, you’ll have screencaps of all the URLs!
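If you’re curious about the logic behind the steps above (open a URL in Safari, wait, screencap, repeat), here is a rough Python sketch of the same idea. To be clear, this is not Joshua Johnson’s AppleScript. It is my own illustration, it assumes you are on a Mac (it calls the built-in `open` and `screencapture` command-line tools), and the function name `capture_all` and the `screencap-0001.png` file naming are made up for the example.

```python
import subprocess
import time


def capture_all(urls, out_dir=".", delay=5, run=subprocess.run, sleep=time.sleep):
    """Open each URL in Safari, wait, then save a screenshot of the screen.

    `run` and `sleep` are parameters only so the loop can be tried out
    without actually opening Safari or waiting.
    """
    # Ignore blank lines and stray whitespace in the URL list.
    cleaned = [url.strip() for url in urls if url.strip()]

    saved = []
    for number, url in enumerate(cleaned, start=1):
        # Ask macOS to open the page in Safari.
        run(["open", "-a", "Safari", url], check=True)
        # Give the page time to load; raise `delay` for slow connections.
        sleep(delay)
        # Capture the whole screen silently (-x turns off the shutter sound).
        path = f"{out_dir}/screencap-{number:04d}.png"
        run(["screencapture", "-x", path], check=True)
        saved.append(path)
    return saved
```

Calling `capture_all(open("urls.txt").read().splitlines(), delay=10)` would work through a file named urls.txt (a hypothetical name) with a ten-second wait per page. Like the AppleScript workflow, it captures whatever is on screen, so Safari still needs to stay in front while it runs.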